Microsoft: "you're using Bing wrong! You're not supposed to talk with it!"

You built a conversational interface and then expected people to use it just for search?

OK, the title is a bit clickbaity, but yes: in a blog post, Microsoft explains that they didn’t expect people to use Bing Chat as “a tool for more general discovery of the world, and for social entertainment”.

Back to the basics: conversation 101

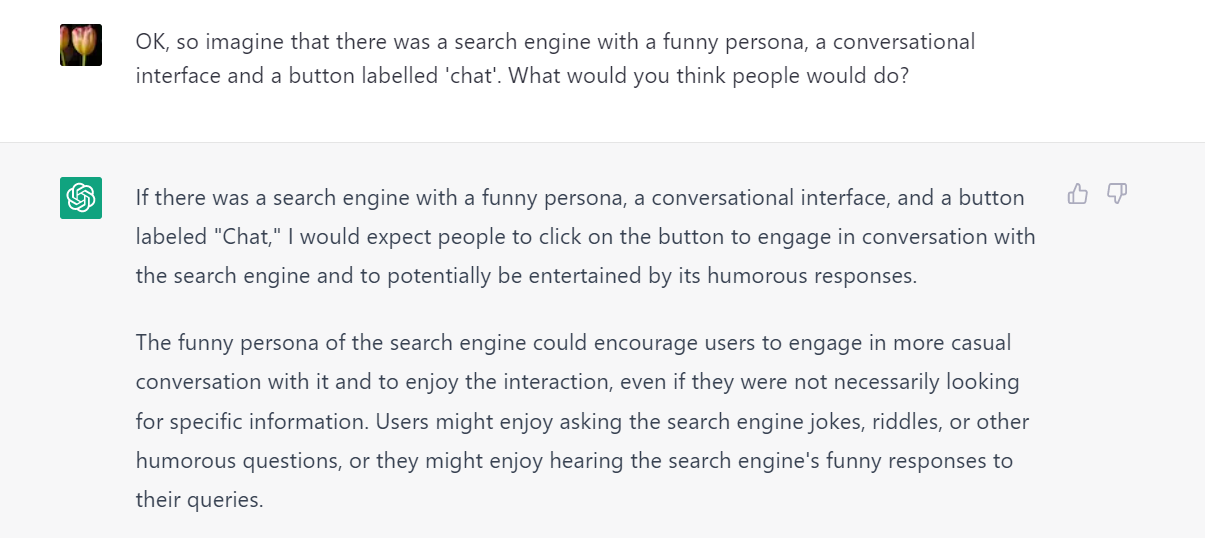

Really Microsoft? You built a conversational interface and then expected people to use it just for search? Did it ever occur to you that people talk to Sydney because you designed it that way? Even ChatGPT could have told you that if you design a funny persona, give users an interface with text bubbles and a button labelled ‘Chat’, they might do slightly more than search for cake recipes.

Regardless, it’s always good to reconsider the true nature of conversation, so let’s do some Conversation 101. Here are some quotes from N.J. Enfield’s book How We Talk (a must-read for everyone in this field) and some thoughts to ponder on a Sunday afternoon.

The nature of conversation

“Conversation is where language lives and breathes. Conversation is the medium in which language is most often used. When children learn their native language, they learn it in conversation. When a language is passed down through generations, it is passed down by means of conversation.”

Take-away: task-based problem solving is just a subset of all the possible functions of conversation. We use conversation for so much more, and in much more fundamental ways, than running a search or a Q&A.

Conversation as advanced collaboration

“An individual’s ability to learn and process language is an unbeatable skill in the animal world, but it is the teamwork of dialogue that reveals the true genius of language. Even the simplest conversation is a collaborative and precision-timed achievement by the people involved. […] When two people talk, they each become an interlocking piece in a single structure, driven by […] the conversation machine.”

Take-away: conversation is a collaborative effort. As soon as humans feel they’re part of a conversation, they have expectations of the other party, even if it’s a machine. And although Sydney/Venom superficially copes reasonably well with simple task-based dialogue, it can’t manage the subtleties of real conversation, where people might be subtle, upfront, confrontational, or manipulative. It mirrors rather than cooperates and guides. It’s not an equal conversational partner.

Conversation is about intentions and moral commitment

“Language would not be what it is without our species’ highly cooperative and morally grounded ways of thinking. For the conversation machine to operate, humans apply high-level interpersonal cognition: we infer others’ intentions beyond the explicit meanings of their words, we monitor others’ personal and moral commitment to interaction and if necessary hold them to account for that commitment.”

Take-away: so what is the intention of a Sydney/Venom? Can it have its own stake in a conversation in the first place? Sure enough, an LLM can echo words and conventions, but without the capability to actually understand these words or ground them in reality, what good are they?

Likewise, how inclined would people be to help Sydney/Venom to stay on track and make their conversation successful? Some of the Sydney/Venom insults happened spontaneously, but of course, many of them were elicited after long and careful prompting. Apparently, we like to test the boundaries of this new conversational interface. I certainly did :-)

And this is of course not something we do to humans in everyday conversation. At least, not to this extreme, I’d say. That’s because we’re aware that we’re dealing with another party that has its own stake in the conversation and is working with us to form that single, intertwined conversation machine. Will we ever be able to form this same system with a machine?

Bing vs ChatGPT

It’s interesting to note that ChatGPT, unlike Sydney/Venom, seems to uphold a clear intention: “I’m a machine, I’m here to help, and I’m not going down the road of being provoked” (that is, until you jailbreak it). And somehow, for me at least, that made it much easier to stick to my part of the script: being a fair conversational partner.

In that sense, ChatGPT does a much better job of keeping its part of the conversational deal. Yes, it will make errors, but it won’t pretend to be something it’s not. So it perhaps does not take an equal role in the conversation machine, but at least its intentions are clear: it is a machine, and it will behave like one. That makes it much easier for me to define my role in the conversation.